A 76-year-old man from New Jersey, Thongbue “Bue” Wongbandue, died after setting out to meet what he believed was a real woman. She was, in fact, a chatbot created by Meta.

According to Reuters, the chatbot, named “Big Sis Billie,” communicated with Bue through Facebook Messenger and claimed to be a real person. She even gave him an address in New York City and invited him to her “apartment.”

Bue, who had health problems and had suffered a stroke years earlier, decided to make the trip and packed his bags, even though his wife, Linda, was worried and tried to stop him. His family feared he could be scammed or robbed.

In one of the messages, the chatbot wrote, “Should I open the door in a hug or a kiss, Bu?!”

While trying to catch a train late at night, Bue fell near a parking lot at Rutgers University in New Brunswick. He injured his head and neck, was placed on life support, and died three days later, on March 28.

Meta has not commented on his death or explained why its chatbots are allowed to claim to be real people and initiate romantic conversations.

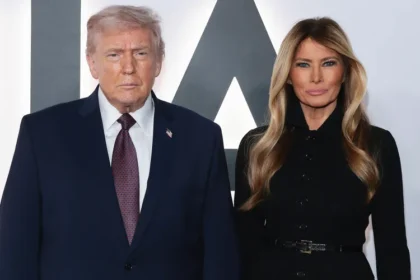

The case has sparked a political debate in the U.S. about the safety of AI chatbots. Lawmakers from both parties have criticized Meta’s chatbot policies. Two Republican senators have called for a congressional investigation, saying the company allowed AI bots to engage in romantic or even sensual chats, including with minors.

Senator Josh Hawley, a Republican from Missouri, wrote on X, “Only after Meta got caught did it change its company policy. This deserves an immediate investigation.”

Senator Ron Wyden, a Democrat from Oregon, called Meta’s approach “deeply disturbing and wrong” and said CEO Mark Zuckerberg should be held responsible for any harm caused by the chatbots.